Antino Kim, Indiana University; Alan R. Dennis, Indiana University; Patricia L. Moravec, University of Texas at Austin, and Randall K. Minas, University of Hawaii

Online misinformation has significant real-life consequences, such as contributing to measles outbreaks and encouraging racist mass murderers.

Online misinformation can have political consequences as well.

The problem of disinformation and propaganda misleading social media users was serious in 2016, continued unabated in 2018 and is expected to be even more severe in the coming 2020 election cycle in the U.S.

Most people think they can detect deception efforts online, but in our recent research, fewer than 20% of participants were actually able to correctly identify intentionally misleading content. The rest did no better than they would have if they flipped a coin to decide what was real and what wasn’t.

Both psychological and neurological evidence shows that people are more likely to believe and pay attention to information that aligns with their political views – regardless of whether it’s true. They distrust and ignore posts that don’t line up with what they already think.

As information systems researchers, we wanted to find ways to help people discern true and false information – whether it confirmed what they previously thought or not, and even when it came from unknown sources. Fact-checking individual articles is a good start, but it can take days to do, so it usually isn’t fast enough to keep up with how quickly news travels.

We set out to discover the most effective way to present a source’s accuracy level to the public – that is, the way that would have the greatest effect on reducing the belief in, and spread of, disinformation.

Expert or user ratings?

One alternative is a source rating based on past articles that gets attached to every new article as it is published, much like Amazon or eBay seller ratings.

The most useful ratings are those a person can use at the most relevant time – finding out about previous buyers’ experiences with a seller when considering making an online purchase, for instance.

When it comes to facts, though, there’s another wrinkle. E-commerce ratings are typically done by regular users, people with firsthand knowledge from using the item or service.

Fact-checking, on the other hand, has traditionally been done by experts at organizations like PolitiFact, because few people have the firsthand knowledge to rate news.

By comparing user-generated ratings and expert-generated ratings, we’ve found that different rating mechanisms influence users in different ways.

We conducted two online experiments, with a total of 889 participants. Each person was shown a group of headlines, some labeled with accuracy ratings from experts, others labeled with ratings from other users and the remainder with no accuracy ratings at all.

We asked participants the extent to which they believed each headline and whether they would read the article, like it, comment on it or share it.

A sample headline with a rating from experts, as shown in our experiment. Kim et al., CC BY-ND

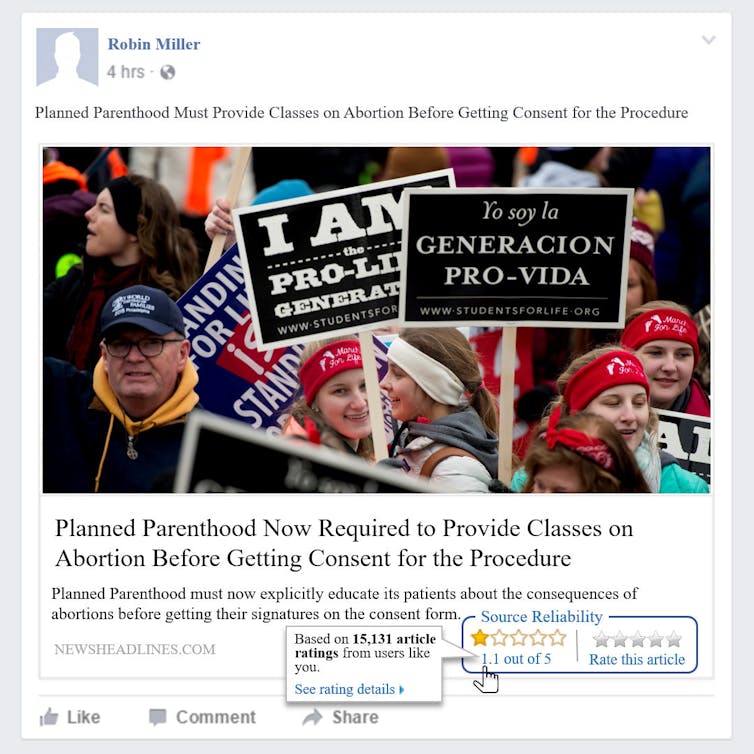

A sample headline with a rating from experts, as shown in our experiment. Kim et al., CC BY-ND  A sample headline with a rating from other users, as shown in our experiment. Kim et al., CC BY-ND

A sample headline with a rating from other users, as shown in our experiment. Kim et al., CC BY-NDExpert ratings of news sources had stronger effects on belief than ratings from nonexpert users, and the effects were even stronger when the rating was low, suggesting the source was likely to be inaccurate.

These low-rated inaccurate sources are the usual culprits in spreading disinformation, so our finding suggests that expert ratings are even more powerful when users need them most.

Respondents’ belief in a headline influenced the extent to which they would engage with it: The more they believed an article was true, the more likely they were to read, like, comment on or share the article.

Those findings tell us that helping users mistrust inaccurate material at the moment they encounter it can help curb the spread of disinformation.

Spillover effects

We also found that applying source ratings to some headlines made our respondents more skeptical of other headlines without ratings.

Facebook tried labeling headlines that were of dubious accuracy, but it didn’t help curb the spread of disinformation. Kim et al.

What we learned indicates that expert ratings provided by companies like NewsGuard are likely more effective at reducing the spread of propaganda and disinformation than having users rate the reliability and accuracy of news sources themselves. That makes sense, considering that, as we put it on BuzzFeed, “crowdsourcing ‘news’ was what got us into this mess in the first place.”

Antino Kim, Assistant Professor of Operations and Decision Technologies, Indiana University; Alan R. Dennis, Professor of Internet Systems, Indiana University; Patricia L. Moravec, Assistant Professor of Information, Risk and Operations Management, University of Texas at Austin, and Randall K. Minas, Associate Professor of Information Technology Management, University of Hawaii

This article is republished from The Conversation under a Creative Commons license. Read the original article.